Biology as Model

How does artificial intelligence work? How reliable can it be? And what is it anyway? A conversation with Robin Hiesinger and Christoph Benzmüller

Jul 21, 2021

Humans versus machines: Human intelligence has no chance against today’s computer programs.

Image Credit: Pexels / Pavel Danilyuk

Robin Hiesinger, a professor of neurogenetics, investigates the fruit fly to study how the brain is wired up. Christoph Benzmüller, a professor of computer science, researches artificial intelligence (AI) and gives lectures on the ethics of AI. Both scientists want to find out how intelligent neural networks are created, in the brain and in computers. Both are based at Freie Universität Berlin.

Professor Benzmüller, Professor Hiesinger, how would you describe artificial intelligence?

BENZMÜLLER: Above all, AI can solve specific problems better than humans, for example, comparing thousands of X-rays to find the smallest tumors, or automatic face recognition. Those are examples of “weak AI.” I am particularly interested in “strong AI,” which aims to surpass all human abilities through artificial systems. We are a long way from that.

HIESINGER: The pioneers of AI had in mind machines that could do something for which a human being would need intelligence. So they defined intelligence with a different intelligence!

Neurogeneticist Robin Hiesinger is using fruit flies to study how the brain is wired up.

Image Credit: Bernd Wannenmacher

That leads to the question: What actually is intelligence?

HIESINGER: As a neurobiologist, I find it difficult to define, and I am not alone in that. When we talk to a person or to a machine, we can judge how intelligent it sounds. In fact, there are already machines that talk in such a way that people are fooled. So you could say that if AI manages to dazzle, then that is a form of intelligence. But the intelligence of a bee is something completely different.

BENZMÜLLER: I think strong AI needs five levels. First, problem solving, in the sense of weak AI. Second, exploring the unknown, as a Mars rover does when it has to perform certain tasks in a strange environment. Third, rational and abstract thinking. Fourth, self-reflection, i.e., finding explanations for failed attempts and questioning the results. Fifth, social interaction, i.e., relating one's actions to personal goals and the values of society. At the moment, the development of AI is at the first two levels.

Do you understand how people can be afraid of machines that could be smarter than they are?

BENZMÜLLER: Yes, of course. That is why AI systems should not be used without reflection mechanisms in critical areas such as the control of large energy networks, automated financial markets, or weapons technology. They should never be deployed hastily or without accompanying control mechanisms.

It starts with autonomous driving. Many people would be reluctant to sit in such a vehicle.

HIESINGER: But they do not hesitate to get into a taxi! And nobody knows the mental state of the cab driver, whether he or she is overtired or distracted because they are thinking about their children or are in the middle of a personal crisis. It is astonishing how flawed our brains are and how flawed our memories are – and how little we as neurobiologists understand about it.

Can a car decide whether an accident is more likely to kill the child on the road or its passengers?

HIESINGER: Whether we want to let an artificial neural network decide such things is really a good question. We leave such decisions to people every day.

BENZMÜLLER: I agree. We are working on architectures that are intended to find answers for safety-critical areas. Just as with human intelligence, they need two levels of action: on the one hand, the intuitive one, which quickly and efficiently decides on the best option, and on the other hand, the ability to reflect on and control the action. That does not lead to ethical machines, but at least to pseudo-ethical ones because they reflect the values that we give them.

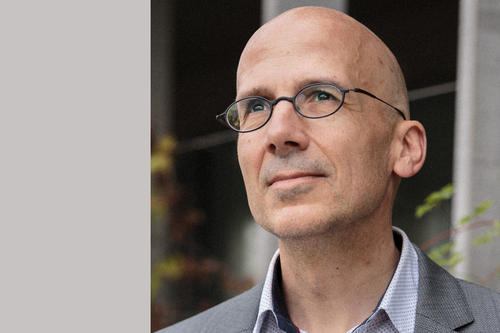

Computer scientist Christoph Benzmüller conducts research on artificial intelligence and the ethics of artificial intelligence.

Image Credit: Max Power

What is the main difference between a biological brain and an artificial neural network?

HIESINGER: Artificial neural networks are designed. Before they are turned on, they can do absolutely nothing. They have to learn, and that takes a great deal of time and energy.

The human brain does not rely on databases and does not perform mathematical operations. “Data” enter the network through sensory stimuli and propagate through the network like a wave. And somehow these sensory data change thousands or even millions of synaptic connections.

But before the human brain can learn, something happens that is entirely left out of current AI approaches: based on a genome, the brain grows. Some structures are added, and others are dismantled, especially during development. Babies are not born with a randomly wired brain, then “switched on” to learn. They are already born with a form of intelligence, even if they cannot immediately solve differential equations.

BENZMÜLLER: There have already been attempts to mathematically simulate a genome, and there are also approaches to dynamically adapt or learn the topologies of neural networks. Many different things are conceivable.

There is an important aspect that should not be overlooked in current AI research: Developing increasingly complex, power-hungry AI technologies is not ecologically justifiable. That is why I am also interested in the integration of data-driven and rule-based AI, since it reduces AI's hunger for data and also enables better abstract explanations.

HIESINGER (with a laugh): The entire history of AI has been an attempt to avoid biological details while creating something that so far exists only in biology, by building a neural network without the many molecules that our nerve cells need in order to communicate. The result contains just a mathematical representation of the essence: strengthening or weakening the transmission between two neurons.

My hypothesis is that this is not enough. In order to achieve human intelligence, you need the whole process of growing and learning in the human brain. There is no shortcut!
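The "mathematical representation" Hiesinger describes can be illustrated with a minimal sketch. Everything in the Python below is an assumption made for illustration and does not come from the interview: a single connection between two artificial neurons is reduced to one number, `weight`, and a simple Hebbian-style rule (the hypothetical function `update_weight`, with an assumed `learning_rate`) strengthens the connection when both neurons are active together and weakens it when only the sending neuron is active. Real AI systems typically use other learning rules, such as gradient-based training.

```python
def update_weight(weight, pre_active, post_active, learning_rate=0.1):
    """Hebbian-style update for one connection (illustrative assumption).

    weight        -- current strength of the connection
    pre_active    -- True if the sending neuron fired
    post_active   -- True if the receiving neuron fired
    learning_rate -- assumed step size for each change
    """
    if pre_active and post_active:
        # Both neurons fire together: strengthen the transmission.
        return weight + learning_rate
    if pre_active and not post_active:
        # Sender fires but receiver stays silent: weaken the transmission.
        return weight - learning_rate
    # Sender silent: leave the connection unchanged.
    return weight


# A short, made-up activity history of (sender fired, receiver fired).
history = [(True, True), (True, True), (True, False), (False, True)]

weight = 0.5
for pre, post in history:
    weight = update_weight(weight, pre, post)

print(round(weight, 2))
```

The sketch contains no growth, no genome, and no molecules, only a number that goes up or down. That reduction is exactly what Hiesinger argues is not enough.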

Researchers in your two disciplines can obviously learn a lot from each other, but they practically never meet. Robin Hiesinger wrote a book, The Self-Assembling Brain: How Neural Networks Grow Smarter, in which he lets fictional protagonists of your two disciplines enter into a dialogue. Would that also be possible in real life?

BENZMÜLLER: That is difficult at conferences because we do not yet have a common technical language. But we should integrate interdisciplinary events into degree programs in order to train generations of researchers who are networked and who ask the crucial questions, including in ethics committees.

Catarina Pietschmann conducted the interview.

This text originally appeared in German on July 3, 2021, in the Tagesspiegel newspaper supplement published by Freie Universität Berlin.

Further Information

- Professor Christoph Benzmüller, Freie Universität Berlin, Institute of Computer Science, Email: c.benzmueller@fu-berlin.de

- Professor Robin Hiesinger, Freie Universität Berlin, Institute of Biology, Email: p.rh@fu-berlin.de